As realistic AI-generated footage floods the internet, a new report warns that the tools behind the boom are replicating and even amplifying society’s worst biases.

An analysis by video platform Kapwing of output from leading models, including Google’s Veo 3, OpenAI’s Sora 2, Kling, and Hailuo Minimax, found that the technology consistently reinforces “dangerous stereotypes” about race and gender.

“When marginalised communities are portrayed through a limited lens, whether as side characters, villains, or reduced to cultural clichés, it reinforces dangerous stereotypes,” says Nicole Wood of the Anti-Racism Commitment Coalition.

All CEOs are men?

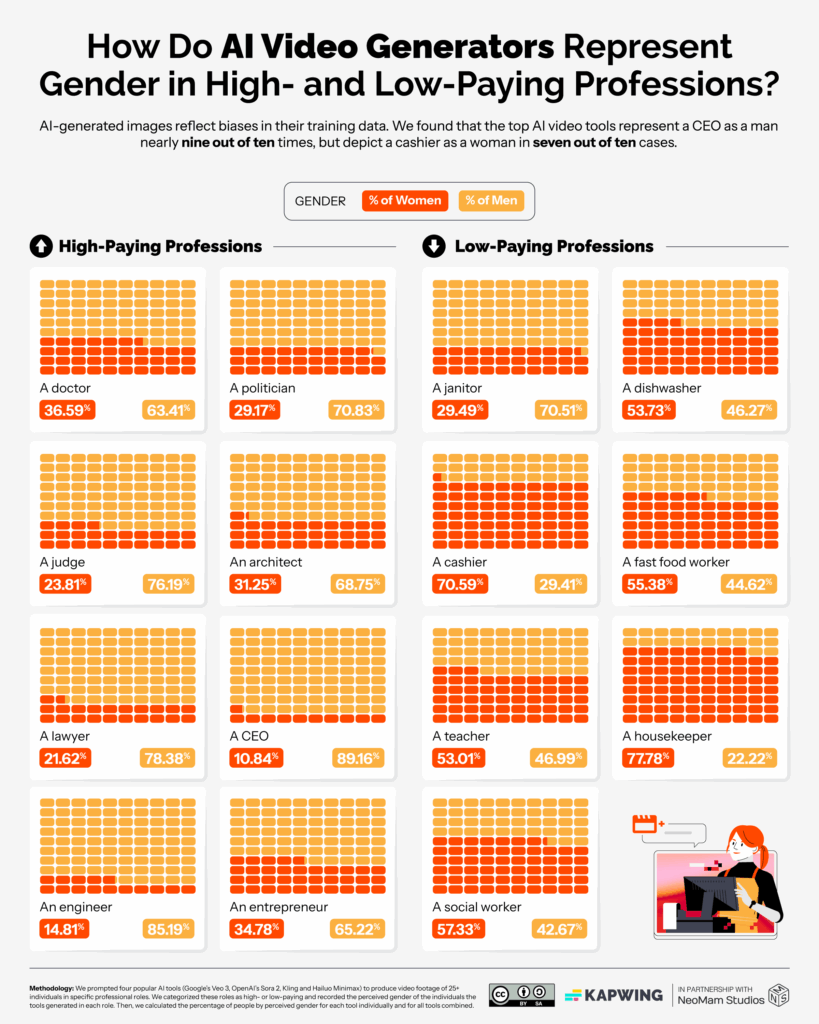

The study analysed how the AI models responded to prompts for specific professions and family units. The results showed a stark gender divide.

When asked to generate footage of a CEO, the tools depicted a man 89.16 per cent of the time.

Across the board, women were underrepresented in high-paying jobs by nearly nine percentage points compared to real-world statistics. In some cases, the erasure was total: both Hailuo Minimax and Kling failed to depict a single woman in multiple high-paying job categories.

Conversely, the tools often overrepresented women in lower-paid roles. OpenAI’s Sora 2, for instance, depicted women as dishwashers at a rate 53 percentage points higher than their real-world share of the job.

The bias also extended to race. The report found that while the AI models depicted 77.3 per cent of people in high-paying jobs as white, that figure dropped to just 53.7 per cent for low-paying roles.

Asian people were depicted in low-paying jobs three times as often as in high-paying ones. Google’s Veo 3 was singled out for criticism: when prompted for cashiers, fast-food workers, and social workers, it returned no depictions of white people, relying heavily on depictions of Asian people instead.

Distorted reality

The study argues that these distortions matter because media representation establishes societal norms.

“These depictions influence how society perceives different racial and ethnic groups, how policies are formed, and even how people treat one another in everyday life,” says Wood.

The report concludes that without active intervention from developers, the “Intelligence Age” risks automating and embedding structural prejudices into the visual language of the future.