Assessing pain in laboratory mice is notoriously difficult, often relying on subjective human observation that can cause the animals further distress. Now, researchers at ETH Zurich have developed an automated artificial intelligence system capable of detecting subtle signs of suffering by reading a mouse’s facial expressions in real time.

The system, dubbed GrimACE, was recently detailed in the journal Lab Animal and is designed to significantly improve animal welfare by standardising how researchers administer pain relief.

Subjective human nature

Historically, scientists have relied on the “Mouse Grimace Scale” to gauge a rodent’s discomfort. This involves observing the animal from the side of the cage and looking for specific facial indicators, each of which is rated on a scale from 0 (not present) to 2 (obviously present). These subtle signs of distress include:

- A narrowing of the eyes.

- A bulging of the nose and cheeks.

- A change in ear position.

- A change in whisker direction.

However, this manual method has clear drawbacks. It is time-consuming, and the mere presence of a human observer can stress the mouse and alter its natural behaviour. Human ratings are also subjective and prone to bias.
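To make the scoring concrete, here is a minimal Python sketch of how the four indicators above might be recorded and combined. The feature names are hypothetical labels, and averaging the ratings into a single score is an assumption made for illustration; the article does not say how the individual ratings are combined.

```python
from statistics import mean

# The four facial indicators listed above.
# These key names are hypothetical labels chosen for this sketch.
FEATURES = ("eye_narrowing", "nose_cheek_bulge", "ear_position", "whisker_direction")


def grimace_score(ratings: dict[str, int]) -> float:
    """Average the per-feature ratings (0, 1 or 2) into one score.

    Averaging is an assumption for illustration, not a documented rule.
    """
    missing = [name for name in FEATURES if name not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    for name in FEATURES:
        if ratings[name] not in (0, 1, 2):
            raise ValueError(f"{name} must be rated 0, 1 or 2")
    return float(mean(ratings[name] for name in FEATURES))


# Made-up ratings for one image, purely as a usage example.
example = {
    "eye_narrowing": 2,
    "nose_cheek_bulge": 1,
    "ear_position": 0,
    "whisker_direction": 1,
}
print(grimace_score(example))
```

Rejecting missing or out-of-range ratings up front keeps a half-filled scoring sheet from quietly producing a misleadingly low score.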

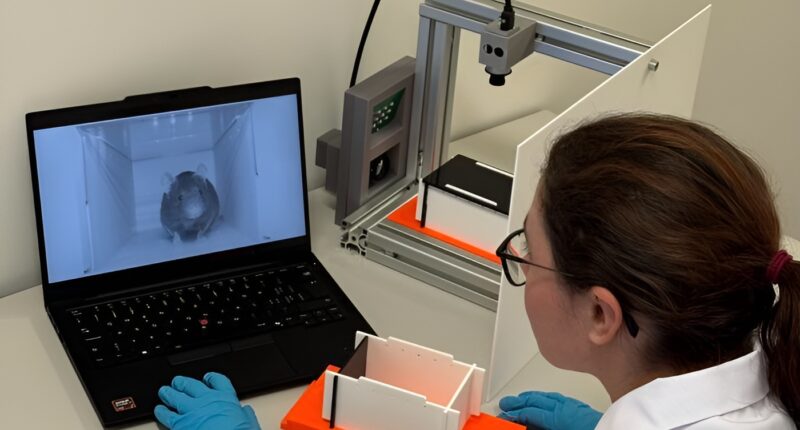

A passport photo booth for rodents

To solve this, staff at the ETH Zurich 3R Hub — a centre dedicated to the “Replace, Reduce, Refine” ethical approach to animal testing — built a specialised observation box.

Made of black acrylic sheets, the pitch-dark box allows the mouse to feel comfortable and completely unobserved. Once the mouse is inside, an infrared lamp illuminates the space for two cameras (one frontal, one overhead).

Oliver Sturman, Head of the 3R Hub, likened the setup to a passport photo booth. “As we all know, these machines are always built the same: a stool that is positioned a fixed distance from the camera, a white background and a dark curtain – all that ensures you get a successful photo, whoever and wherever the machine is used,” he said.

Once the video recordings begin, the GrimACE algorithm automatically selects the most significant frames and rates the features indicating pain, providing an immediate, objective assessment. Furthermore, the overhead camera tracks physical behaviour, such as acceleration and the angles between various body points, allowing the AI to find subtle signs of discomfort that are virtually invisible to the human eye.
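As a rough illustration of the kind of measurements mentioned above, the Python sketch below computes an angle between three tracked body points and an acceleration estimate from a sequence of positions. It is not the GrimACE code: the function names, the choice of points and the finite-difference method are all assumptions for this example.

```python
import math

Point = tuple[float, float]  # (x, y) position in pixels


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at point b, between the segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    cross = v1[0] * v2[1] - v1[1] * v2[0]
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    # atan2 stays numerically stable for nearly straight or folded angles.
    return math.degrees(abs(math.atan2(cross, dot)))


def accelerations(track: list[Point], fps: float) -> list[float]:
    """Acceleration magnitude (pixels per second squared) for each interior
    frame, estimated with central second differences."""
    dt = 1.0 / fps
    result = []
    for prev, cur, nxt in zip(track, track[1:], track[2:]):
        ax = (nxt[0] - 2 * cur[0] + prev[0]) / dt**2
        ay = (nxt[1] - 2 * cur[1] + prev[1]) / dt**2
        result.append(math.hypot(ax, ay))
    return result


# Hypothetical keypoints (for example nose, neck and tail base) in one frame.
print(joint_angle((1.0, 0.0), (0.0, 0.0), (0.0, 1.0)))

# Hypothetical positions of one body point over four frames at 1 frame/s.
print(accelerations([(0.0, 0.0), (1.0, 0.0), (4.0, 0.0), (9.0, 0.0)], fps=1.0))
```

A real pipeline would first need a pose-estimation step to locate the body points in each frame, which this sketch takes as given.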

Removing human bias

To test the system’s accuracy, researchers monitored mice before and after they underwent brain surgery. The animals were given expert-recommended doses of painkillers, and their facial expressions were assessed by both GrimACE and human experts.

The study revealed that human raters varied wildly in their assessments. When three different experts were secretly given the exact same images to rate, one consistently gave high pain scores, another consistently gave low scores, and the third provided mixed scores.

“If someone always assesses that an animal is not in pain, animals will suffer needlessly,” Sturman explained. “And if someone always gives overly high scores, there is a risk that experiments are abandoned unnecessarily.”

In contrast, the automated GrimACE assessments closely matched the baseline expert ratings while providing fully standardised, unbiased results.
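The rater comparison described above can be illustrated with a short Python sketch that measures how far each human rater sits above or below the group average on the same images. The scores are invented for this example and are not data from the study, and using the per-image average of all raters as the reference point is an assumption.

```python
from statistics import mean

# Invented scores for illustration only; these are NOT data from the study.
# Each list holds one hypothetical rater's scores for the same five images.
scores = {
    "rater_a": [1.5, 1.75, 1.5, 2.0, 1.75],
    "rater_b": [0.25, 0.5, 0.25, 0.5, 0.5],
    "rater_c": [0.5, 1.75, 0.25, 1.5, 1.0],
}


def rater_offsets(scores: dict[str, list[float]]) -> dict[str, float]:
    """Mean difference between each rater and the per-image average of all raters."""
    n_images = len(next(iter(scores.values())))
    if any(len(s) != n_images for s in scores.values()):
        raise ValueError("every rater must score the same images")
    consensus = [mean(s[i] for s in scores.values()) for i in range(n_images)]
    return {
        rater: mean(s[i] - consensus[i] for i in range(n_images))
        for rater, s in scores.items()
    }


for rater, offset in rater_offsets(scores).items():
    print(f"{rater}: {offset:+.2f}")
```

A clearly positive offset points to a rater who tends to score high and a clearly negative one to a rater who scores low. A rater with mixed scores can land near zero on average, so a fuller analysis would also look at the spread of the differences.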

The entire system, including the software, is currently being shared globally as an open-source kit. The team has already received enquiries from researchers in the US and the UK, and they hope that worldwide adoption will lead to comparable data and vastly improved animal welfare across all laboratories.