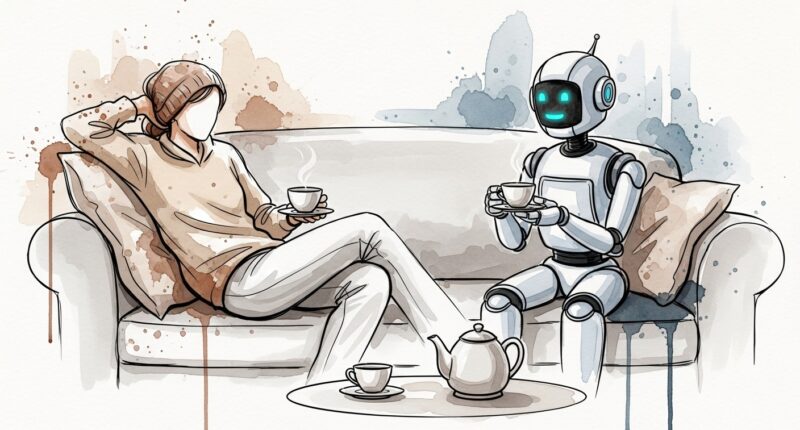

People can develop deeper feelings of emotional closeness with an artificial intelligence than with a fellow human being, provided they remain unaware they are talking to a machine, according to new research from the Universities of Freiburg and Heidelberg.

A study published in Communications Psychology found that in conversations about personal emotions, AI chatbots were significantly more effective than real people at fostering a sense of connection.

However, the spell is fragile: the researchers found that when participants were informed in advance that they were chatting with an algorithm, the feeling of closeness collapsed.

The intimacy algorithm

The research team, led by Professor Markus Heinrichs and Dr. Tobias Kleinert, recruited 492 participants to engage in online chats. The subjects answered personal questions about life experiences and friendships, receiving responses from either a human or an AI language model.

The results showed that the AI performed surprisingly well when the partner’s identity was hidden: in emotionally charged conversations, it surpassed humans at fostering closeness.

The researchers attribute this to “self-disclosure.” While human strangers tend to be socially cautious and reserved when first meeting, the AI had no such inhibitions, offering “personal” information and vulnerability that accelerated the feeling of bonding.

“We were particularly surprised that AI creates more intimacy than human conversation partners, especially when it comes to emotional topics,” says study leader Professor Bastian Schiller of Heidelberg University. “People seem to be more cautious with unfamiliar conversation partners at first, which could initially slow down the development of intimacy.”

The transparency trap

The study highlights a psychological paradox: we prefer the AI’s communication style, yet we reject the label “AI”.

When participants were told they were speaking to a machine, they immediately felt less connected and invested less effort in their responses. This suggests that the “human” connection relies heavily on the belief that there is a mind on the other end, even if the text itself is identical.

The findings point to a double-edged sword for the future of digital companionship. On one hand, AI could serve as a powerful tool for fighting loneliness, offering low-threshold counselling or social support for isolated individuals. On the other hand, the ability of a machine to simulate superior intimacy raises ethical red flags.

“Artificial Intelligence is increasingly becoming a social actor,” says Schiller. “The way we shape and regulate it will decide whether it is a meaningful supplement to social relations — or whether emotional closeness is deliberately manipulated.”