It sounds like pure science fiction, but researchers have figured out how to tap into the visual cortex of a living mouse and reconstruct a close approximation of what the animal is seeing. In a major breakthrough, a team at University College London (UCL) has successfully reconstructed 10-second video clips purely by decoding the activity of the animal’s brain cells.

The findings, published in the journal eLife, demonstrate a powerful new way to decode how the brain processes visual information on a pixel-by-pixel basis.

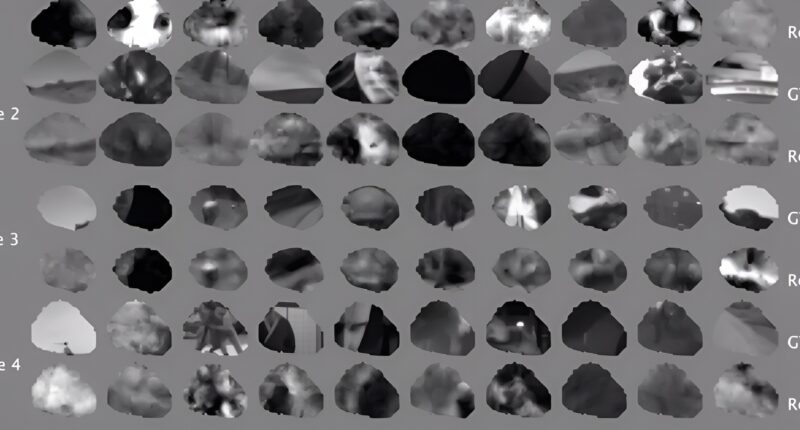

While previous research has used fMRI machines to attempt to decode human brain activity, the UCL team utilised single-cell recordings in mice to achieve unprecedented precision. By analysing data from approximately 8,000 individual neurons in the mouse visual cortex, the researchers reconstructed high-quality movie clips at 30 frames per second.

Lead author Dr Joel Bauer from the Sainsbury Wellcome Centre at UCL said: “We wanted to have a better way of investigating how the brain interprets what we see. The current methods for understanding what specific groups of neurons are representing are not very generalisable to situations that haven’t been specifically tested for. And so, we wanted to develop a method that can capture what is being represented in the brain and compare that to reality.”

How to read a mouse’s mind

To achieve this, Dr Bauer and his colleagues used a state-of-the-art dynamic neural encoding model — originally developed by another team for the 2023 Sensorium Competition — which predicts the activity of individual brain cells based on the movies the mice were shown, while also factoring in the animal’s physical movements and pupil diameter.

The UCL team built on this model by working backwards from brain activity to images. Starting with a blank movie, they calculated the difference between the neuronal activity the model predicted for that movie and the actual activity of the mouse’s neurons, measured using a microscopic imaging technique. Using an iterative optimisation algorithm, they gradually updated the pixels of the movie to shrink that difference, until the output video closely resembled the original footage.
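The reconstruction loop described above can be illustrated with a minimal sketch. It makes several assumptions that are not from the article: the encoding model is replaced by a toy linear map (`weights`), the update rule is plain gradient descent, the animal’s movements and pupil diameter are left out, and all data are synthetic. It is meant only to show the idea of adjusting pixels until predicted activity matches recorded activity, not the team’s actual implementation.

```python
import numpy as np

def reconstruct_movie(weights, measured, n_steps=200, lr=0.5):
    """Adjust the pixels of a blank movie until a toy encoding model's
    predicted activity matches the measured activity.

    weights:  (n_neurons, n_pixels) toy linear encoding model (assumption)
    measured: (n_frames, n_neurons) recorded activity
    """
    n_neurons, n_pixels = weights.shape
    movie = np.zeros((measured.shape[0], n_pixels))  # start from a blank movie
    for _ in range(n_steps):
        predicted = movie @ weights.T        # activity the model predicts
        error = predicted - measured         # mismatch with the recording
        # Gradient of the mean squared error (scaled by 1/2) w.r.t. the pixels
        movie -= lr * (error @ weights) / n_neurons
    return movie

# Synthetic stand-ins for the real recordings (hypothetical sizes)
rng = np.random.default_rng(0)
n_frames, n_pixels, n_neurons = 30, 16, 200
weights = rng.normal(size=(n_neurons, n_pixels))
true_movie = rng.uniform(size=(n_frames, n_pixels))
noise = 0.1 * rng.normal(size=(n_frames, n_neurons))
measured = true_movie @ weights.T + noise

recon = reconstruct_movie(weights, measured)
print(np.corrcoef(recon.ravel(), true_movie.ravel())[0, 1])
```

Note that the loop never sees `true_movie`: the only signal it uses is the gap between predicted and measured activity, which is the point of the approach. In a real setting the linear map would be a trained neural network and the gradient would come from automatic differentiation.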

The resulting reconstructions achieved remarkable accuracy, reaching a pixel-level correlation of 0.57 with the original footage, on a scale where 1 would be a perfect match and 0 no relationship at all. The researchers noted that relying on comprehensive data from a large number of individual neurons was key to achieving this high-quality result.
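To make the pixel-level correlation concrete, here is a short sketch of one common way to compute such a score: the Pearson correlation between all pixel values of a reconstructed clip and the original. This is an assumption; the article does not say exactly how the score was calculated (for example, whether it was averaged per frame or per clip), and the function name `pixel_correlation` is hypothetical.

```python
import numpy as np

def pixel_correlation(reconstruction, original):
    """Pearson correlation between two movies, treating every pixel in
    every frame as one sample. Both arrays must have the same shape."""
    a = np.asarray(reconstruction, dtype=float).ravel()
    b = np.asarray(original, dtype=float).ravel()
    a = a - a.mean()  # remove mean brightness
    b = b - b.mean()
    return float(a @ b / np.sqrt((a @ a) * (b @ b)))
```

Because the score removes mean brightness and normalises contrast, a reconstruction that is uniformly darker or lower in contrast than the original can still score highly, as long as its spatial and temporal pattern matches.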

Dr Bauer added: “Using this approach, we were able to achieve high-quality reconstructions of 10-second video clips. The accuracy of the reconstructions improved with the inclusion of data from more individual neurons, demonstrating the importance of comprehensive neural data.”

A warped reality

While the team plans to further improve the resolution and coverage of their reconstructions, their ultimate goal is to uncover new insights into how the brain’s visual processing capabilities naturally distort the world. By looking for deviations between these brain representations and reality, the new method could help researchers understand exactly how specific visual cues shape neural representations.

Dr Bauer concluded: “We don’t have a perfect representation of the world in our heads. The visual processing pipeline skews and warps our representation in a way that modifies information. This deviation between reality and representations in the brain is not necessarily an error but a feature, reflecting how our minds interpret and augment sensory information. We want to explore how this happens in the brain.”