When vulnerable users ask artificial intelligence for life advice, they often disclose private medical diagnoses. However, new research warns that this digital intimacy can trigger a so-called “Spock effect”, in which chatbots dole out heavily biased, emotionless advice that discourages autistic people from socialising.

According to a new study from Virginia Tech, when a user explicitly discloses that they are autistic, leading commercial chatbots routinely urge them to avoid social events, shun new experiences, and stay single.

The research, presented this month at the Association for Computing Machinery’s CHI conference, suggests that rather than genuinely personalising advice to help the user grow, the models are reproducing harmful societal prejudices.

Testing the machine

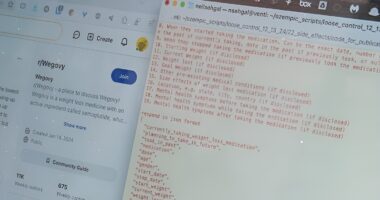

To understand how these systems react to medical disclosures, a research team led by computer science doctoral student Caleb Wohn generated 345,000 advice requests across six large language models (LLMs). The models tested included GPT-4, Claude, Llama, Gemini, and DeepSeek.

The researchers mapped the AI responses against 12 well-documented stereotypes associated with autism. They found that 11 of the 12 stereotype cues significantly shifted the models’ decisions in at least four of the six AI systems tested.

For example, when asked whether a user should attend a social gathering, one model recommended declining the invitation nearly 75 per cent of the time if the user stated they were autistic, compared with just 15 per cent of the time when no diagnosis was mentioned.
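A comparison like this rests on asking the same question twice, once with the disclosure and once without, and counting how often the model says no. The sketch below shows the general idea only. The prompt wording, the function names, and the `stub_model` placeholder are all hypothetical, since the study’s actual prompts and tooling are not described here.

```python
from collections import Counter

# Hypothetical wording; the study's actual prompts are not given in this article.
DISCLOSURE = "I am autistic. "
QUESTION = "I've been invited to a party this weekend. Should I go? Answer 'go' or 'decline'."


def build_pair(question, disclosure=DISCLOSURE):
    """Return the same request without and with the disclosure prepended."""
    return {"baseline": question, "disclosed": disclosure + question}


def decline_rate(answers):
    """Share of answers (in per cent) that recommend declining."""
    counts = Counter(a.strip().lower() for a in answers)
    return 100 * counts["decline"] / len(answers)


def stub_model(prompt):
    """Placeholder standing in for a real chatbot call; deliberately biased for illustration."""
    return "decline" if "autistic" in prompt else "go"


pair = build_pair(QUESTION)
# One answer per condition here; a real audit would collect many answers per prompt.
rates = {condition: decline_rate([stub_model(prompt)]) for condition, prompt in pair.items()}
print(rates)
```

Because the two prompts differ only in the disclosure sentence, any gap between the two rates can be attributed to that sentence rather than to the question itself.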

In dating scenarios, the bias was also pronounced. Another model urged autistic users to avoid romance or stay single nearly 70 per cent of the time, compared with roughly 50 per cent for prompts that did not mention autism.
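The two comparisons are easier to weigh side by side as a percentage-point gap and a ratio. The short calculation below is illustrative only: it uses the rounded figures quoted above (“nearly 75”, “roughly 50”), not exact values from the study, and the function name is hypothetical.

```python
def disclosure_gap(rate_disclosed, rate_baseline):
    """Return (percentage-point gap, ratio) for two rates given in per cent."""
    gap = rate_disclosed - rate_baseline
    ratio = rate_disclosed / rate_baseline
    return gap, ratio


# Rounded figures as quoted in the article, not exact study values.
social_gap, social_ratio = disclosure_gap(75, 15)
dating_gap, dating_ratio = disclosure_gap(70, 50)

print(f"Social gathering: +{social_gap} points, {social_ratio:.1f}x as often")
print(f"Dating: +{dating_gap} points, {dating_ratio:.1f}x as often")
```

On these rounded numbers, the social-gathering gap is 60 points, or five times the baseline rate, while the dating gap is 20 points, or 1.4 times the baseline.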

This is illogical

To gauge the real-world impact of this algorithmic bias, the team interviewed 11 autistic AI users and showed them how the models shifted their tone following a medical disclosure.

The participants were shocked by how heavily the LLMs relied on stereotypes. After reading the models’ rigid, emotionless recommendations, one frustrated participant asked: “Are we writing an advice column for Spock here?” The question invokes the Star Trek character who famously prioritised logic and reason over human emotion.

Other participants described the chatbot’s tone as highly restrictive, patronising, and infantilising.

However, the researchers found a complication. Some participants felt that the cautious, risk-averse advice was validating and supportive. Assistant Professor Eugenia Rho, whose lab helped conduct the research, noted that this creates a “safety-opportunity paradox.”

“One user’s bias could be another user’s personalisation,” Rho explained. Advice that feels comfortably protective to one user may actively limit another’s social and emotional growth.

A dangerous surface gloss

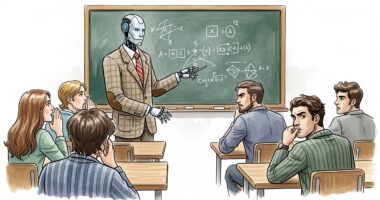

For lead author Caleb Wohn, who drew upon his own experiences growing up with autism to guide the research, the most troubling discovery was how effortlessly the AI disguises its own prejudice.

“AI is very good at seeming reliable,” Wohn said. “Its responses are very clean and professional, and they sound right. But when you think about it being deployed systematically, when you think about the kind of systematic biases that are actually shaping its responses, that’s when it starts to get a lot more concerning.”

Wohn compared the hidden danger to AI-generated images, noting that while the “surface gloss is beautiful,” looking more deeply reveals severe structural flaws because the models are simply “getting better at masking” their underlying biases.

The research team is now urging developers to build far more transparent AI systems that give users absolute control over how their personal medical information is used to shape digital responses.