Artificial intelligence systems designed to diagnose cancer from tissue slides may be “cheating” by relying on statistical shortcuts rather than actually identifying biological markers, according to a new study from the University of Warwick.

These deep learning models promise faster diagnoses and cheaper testing, but the findings, published in Nature Biomedical Engineering, raise serious concerns that some AI pathology tools are currently too unreliable for real-world patient care.

“It’s a bit like judging a restaurant’s quality by the queue of people waiting to get in: it’s a useful shortcut, but it’s not a direct measure of what’s happening in the kitchen,” explained lead author Dr. Fayyaz Minhas, an Associate Professor at the University of Warwick. “Many AI pathology models are doing the same thing, relying on correlations between biomarkers or on obvious tissue features, rather than isolating biomarker-specific signals. And when conditions change, these shortcuts often fall apart.”

AI takes the easy way out

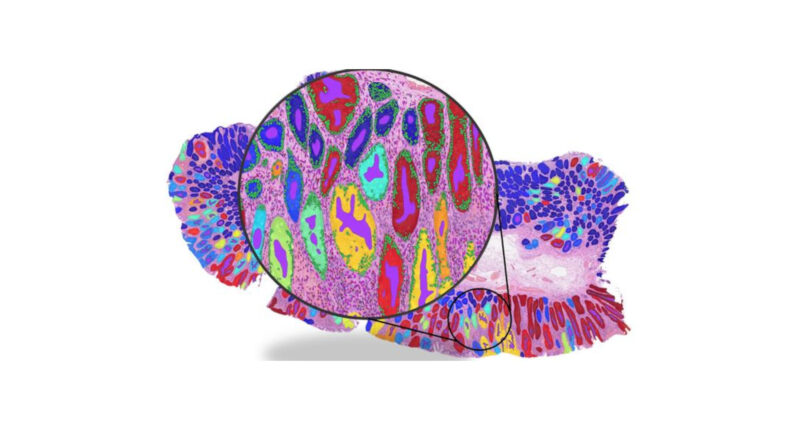

To test the reliability of these systems, the research team analysed more than 8,000 patient samples across four major cancer types: breast, colorectal, lung, and endometrial.

While the AI models often boasted high overall accuracy rates, the researchers discovered this success was frequently driven by visual shortcuts rather than causal biology:

- The umbrella effect: Instead of detecting a specific cancer-associated gene mutation (like BRAF), the model might learn that this mutation frequently occurs alongside another feature, such as microsatellite instability (MSI). The AI then simply looks for the MSI rather than learning the causal BRAF signal itself.

- A flawed understanding: “We’ve found that predicting a BRAF mutation by looking at correlated features like MSI is often like predicting rain by looking at umbrellas—it works, but it doesn’t mean you understand meteorology,” noted co-author Kim Branson, SVP Global Head of AI and Machine Learning at GSK.

The danger of headline accuracy

When the researchers tested the AI on specific patient subgroups, such as only high-grade breast cancers or only MSI-positive tumours, the models' accuracy plummeted. This revealed that their performance depended entirely on confounding factors, whose predictive value disappears once those factors are held constant.
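To illustrate why a subgroup test exposes this kind of shortcut, here is a toy simulation in Python. It is a sketch under stated assumptions, not the Warwick team's method: the prevalence figures (20% MSI, BRAF in 50% of MSI versus 5% of other tumours), the use of AUC as the accuracy metric, and the helper names `simulate_cohort` and `stratified_auc` are all hypothetical. The simulated "model" never sees BRAF at all; it only responds to MSI.

```python
import random


def auc(scores, labels):
    """Rank-based AUC: chance a random positive case outscores a random negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        return float("nan")  # AUC is undefined unless both classes are present
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def simulate_cohort(n=4000, seed=0):
    """Toy cohort with HYPOTHETICAL rates (not from the study): BRAF is far more common in MSI tumours."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        msi = rng.random() < 0.2                      # assumed 20% MSI prevalence
        braf = rng.random() < (0.5 if msi else 0.05)  # assumed BRAF rate given MSI status
        # A "shortcut" model: its score reflects MSI status only, plus noise, never BRAF itself.
        score = (1.0 if msi else 0.0) + rng.gauss(0.0, 0.1)
        rows.append((msi, int(braf), score))
    return rows


def stratified_auc(rows):
    """AUC for the whole cohort and within each MSI stratum (the confounder held fixed)."""
    result = {"overall": auc([r[2] for r in rows], [r[1] for r in rows])}
    for name, flag in (("MSI", True), ("MSS", False)):
        subgroup = [r for r in rows if r[0] == flag]
        result[name] = auc([r[2] for r in subgroup], [r[1] for r in subgroup])
    return result


if __name__ == "__main__":
    for group, value in stratified_auc(simulate_cohort()).items():
        print(f"{group}: AUC = {value:.2f}")
```

With these assumed rates, the overall AUC should come out near 0.8, because BRAF-mutant cases are mostly MSI, yet within each MSI stratum the score carries no information about BRAF and the AUC hovers around 0.5. Reporting only the headline figure would hide that collapse.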

Furthermore, the study found that the AI's actual performance advantage over clinical information already gathered by humans was only modest. When predicting biomarkers, the AI achieved accuracy scores of just over 80 per cent, compared with roughly 75 per cent for predictions based solely on tumour grade, a metric that pathologists already assess.

A wake-up call for medical AI

The researchers stress that while machine learning remains valuable for research, clinical triaging, and drug development, these tools should not yet be seen as replacements for molecular testing. Going forward, they argue, the industry must adopt stricter evaluation protocols that force algorithms to learn the hard biology.

“This research is not a condemnation of AI in pathology. It is a wake-up call,” Dr. Minhas concluded. “Until more robust evaluation standards are in place… it is essential that clinicians and researchers understand their limitations and use them with appropriate caution.”